There is a ghost haunting the halls of modern academia. It is not the ghost of Artificial Intelligence. It is the ghost of a university that no longer exists.

When we discuss the university-wide transformation of our educational programs, the fiercest resistance rarely comes from a place of logic or pedagogical evidence. It comes from nostalgia. As educators and administrators, we cling to a romanticized vision of higher education: a professor sitting with a handful of brilliant apprentices, exchanging deeply nuanced feedback in a sunlit seminar room.

It is a beautiful image. It is also mathematically impossible.

In an era when we educate cohorts of hundreds or thousands, clinging to this bespoke, artisanal ideal does not protect the “human touch”. It actively prevents it. By treating the traditional, industrial-era model of mass education as sacred, we are blinding ourselves to its profound logistical failures, its hidden inequities, and the widespread exhaustion of our faculty.

The “anti-AI” stance is frequently dressed up as a moral high ground. Let us be relentlessly honest: attempting to ban or ignore Generative AI is not a principled defense of rigorous pedagogy. It is an administrative retreat. It is the choice to manage the decline of a broken system rather than do the hard, uncomfortable work of building the 5th Generation University.

To survive the coming decade, we must strip away the romanticism and confront the mathematical reality of modern university cohorts. If we are serious about saving the human element of higher education, we must stop fighting the exact tools designed to liberate it.

Here are the five reasons why embracing Authentic Intelligence is no longer just a technological upgrade, but an urgent, non-negotiable institutional necessity.

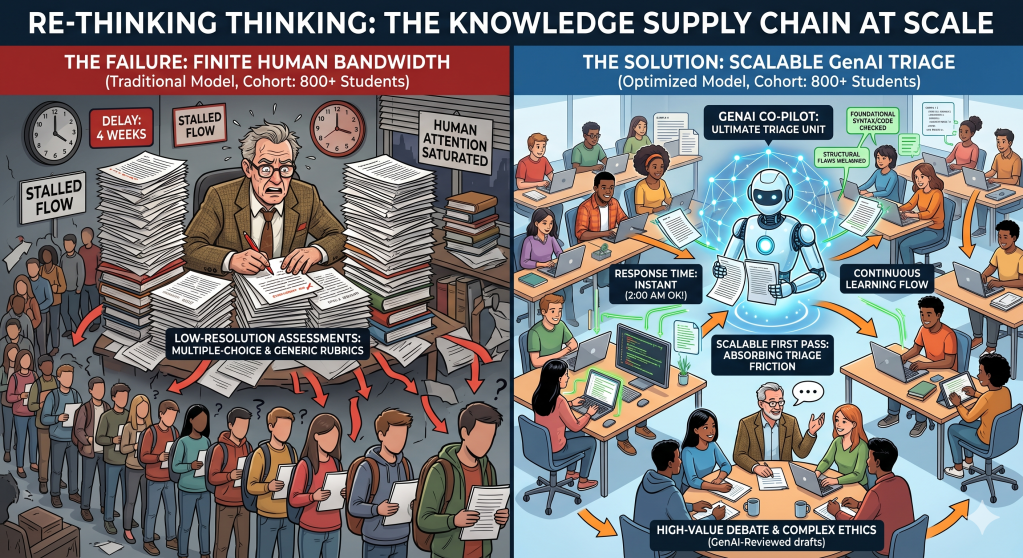

1. The “Human-Only” Assessment Model Does Not Scale (The Supply Chain of Knowledge)

To understand the failure of the current model, we have to view education as a complex logistical network. The traditional ideal, in which a master meticulously reviews an apprentice’s draft, offers highly contextual feedback and engages in deep debate, is a bespoke, artisanal process.

When you scale a bespoke process to accommodate cohorts of 500 or 1,000 students, the supply chain breaks. Human cognitive bandwidth is finite. Because professors physically cannot provide deep, line-by-line feedback to hundreds of students in a timely manner, the system defaults to low-resolution assessment: multiple-choice exams, standardized rubrics, and feedback that arrives weeks too late.

GenAI acts as the ultimate triage unit. It is the scalable “first pass” that absorbs the friction of high-volume, low-complexity work. If an AI co-pilot instantly checks foundational syntax, tests baseline code, and highlights structural flaws in a draft at 2:00 AM, the student doesn’t have to wait three weeks for a professor’s red pen. By offloading this first pass to the machine, we keep the flow of learning continuous rather than letting it stall while it waits for finite human attention.

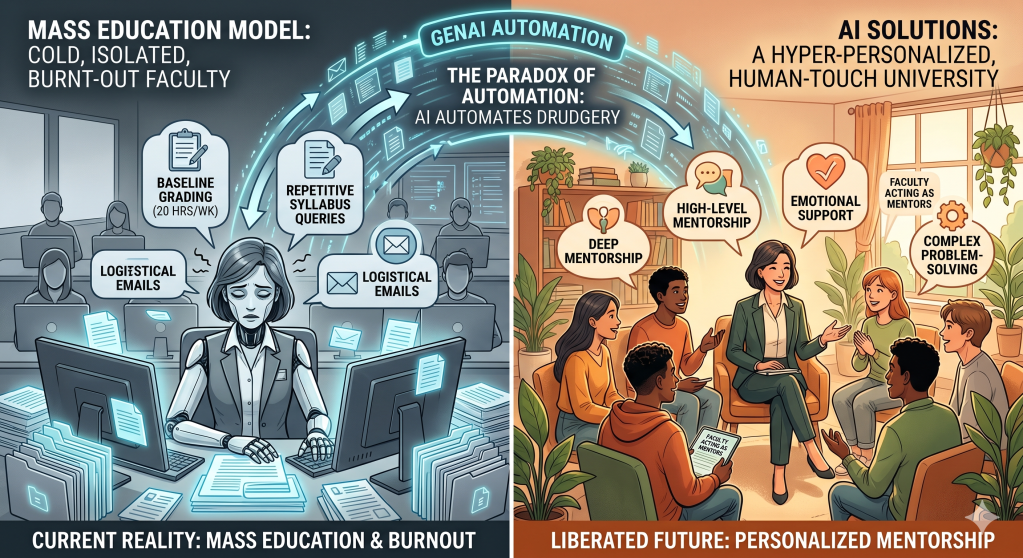

2. The Paradox of Automation: AI Enables More Human Interaction

There is a reflexive fear that injecting algorithms into the classroom will create a cold, dystopian, and isolated environment. But we must be honest about the present: the mass-education model has already removed the human touch.

Faculty are burning out under the weight of administrative friction and grading overload. If an educator spends twenty hours a week diagnosing the exact same basic conceptual misunderstandings across a massive cohort, they have zero energy left for deep, intellectual mentorship.

This is the paradox of automation: to make a large university feel hyper-personalized, you must automate the mechanical aspects of teaching. By deploying GenAI to handle the “drudgery” (syllabus queries, baseline grading, repetitive logistical emails), we free up the faculty’s schedule. That reclaimed time is then reinvested directly in human interaction. We stop acting as syntax-checkers and start acting as mentors, focusing our interactions on high-level debate, emotional support, and complex problem-solving.

3. Banning AI Creates a Dangerous “Shadow Economy”

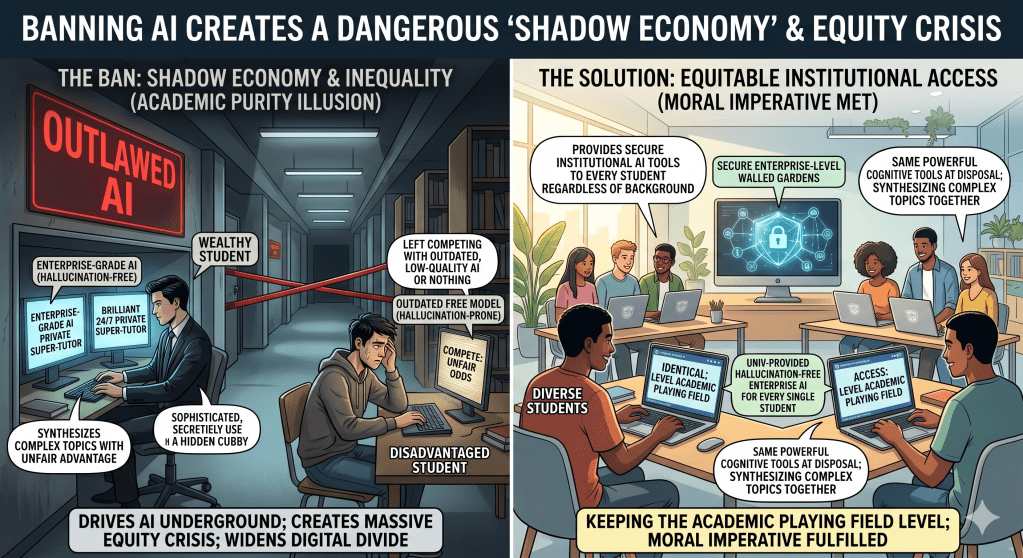

The belief that an institution can successfully “ban” AI is an illusion. Refusing to integrate AI into the curriculum does not preserve academic purity; it merely drives the technology underground and creates a massive equity crisis.

We are facing a new kind of digital divide. If the university simply outlaws these tools, wealthy students will quietly pay for premium, enterprise-grade models that hallucinate less. They will have access to a brilliant, 24/7 private “super-tutor” that helps them synthesize complex topics. Meanwhile, disadvantaged students will be left trying to compete using outdated, more hallucination-prone free models, or nothing at all.

Embracing AI is a moral imperative. It means the university steps up to provide equitable, institutional access by creating secure, enterprise-level walled gardens. This ensures that every student, regardless of socioeconomic background, has the same powerful cognitive tools at their disposal, keeping the academic playing field level.

4. “AI-Blindness” Is Academic Malpractice

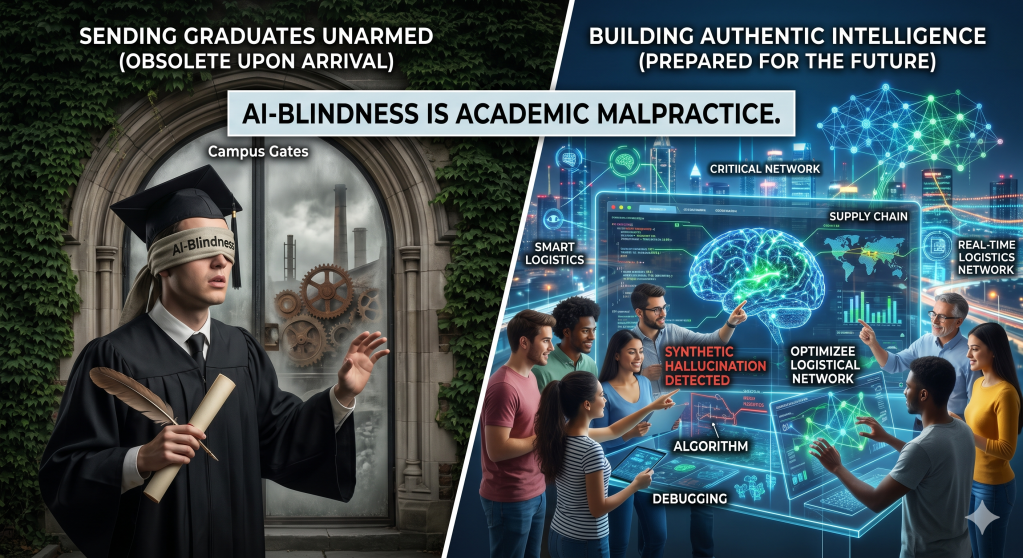

We are preparing students for a world that is fundamentally rewiring itself. In highly complex, data-heavy fields, algorithms are already predicting supply chain disruptions, optimizing routes in real time, and managing massive physical networks.

If we graduate students who have been artificially shielded from these technologies in the classroom, we are sending them into the professional arena totally unarmed. They will be obsolete upon arrival.

Our mandate is not just to teach the theory of a discipline, but to build “Authentic Intelligence”. We must teach students how to ethically interrogate algorithms, how to spot a synthetic hallucination masquerading as a fact, and how to command AI as an optimization tool for massive, multi-variable problems. We cannot foster digital literacy by pretending the digital world stops at the campus gates.

5. Moving from “Information Retrieval” to “Judgment”

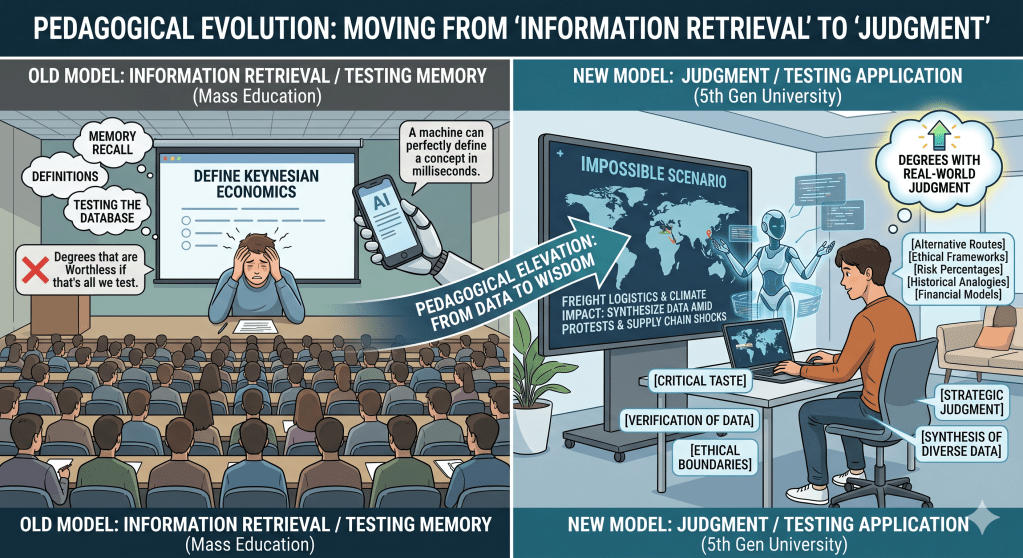

The most profound shift AI forces upon the university is the elevation of our pedagogical standards. Because large classes are so difficult to assess, we have historically defaulted to testing memory recall—asking students what a framework is, or when an event happened.

GenAI has fully commoditized information retrieval. A machine can fluently define a concept in seconds. If that is all we are testing, our degrees are worthless.

Embracing AI forces the curriculum to evolve. We must stop testing students on whether they can memorize a theory, and start testing them on how they apply that theory to a complex, unfamiliar scenario using an AI co-pilot. We shift from asking them to generate “average” content to requiring them to synthesize diverse data points. We move from testing memory to testing the uniquely human traits that algorithms lack: critical taste, verification, ethical boundaries, and strategic judgment.